East Japan Railway Co. has suspended a program to use facial recognition to track ex-convicts who turn up at its stations.

A company official said Sept. 21 the decision was made “because a social consensus has not yet been reached” on the issue.

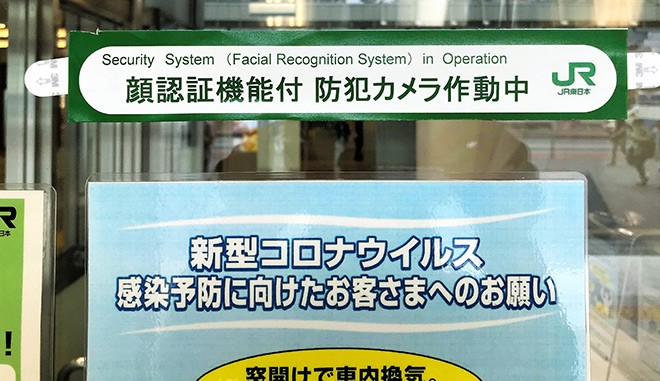

JR East had announced on July 6 that it was beginning operations of a network of 8,359 security cameras at 110 of its major stations as well as power substations as a safety measure for the Tokyo Olympics and Paralympics.

But the company did not reveal which types of individuals would be monitored.

It now turns out that one element of the security package was to use facial recognition technology to automatically track former convicts or parolees who had at one time been suspected of plotting terrorist acts at train stations.

Photos of individuals arrested on such suspicions would have been entered into JR East's computer system, and the system would have tracked them through the security cameras if it detected their presence at a station. Station employees would also have been allowed to question them and even check their belongings.

Company officials conceded, however, that as of September, no photos of former convicts or parolees had been entered into the system.

JR East decided to suspend the program after The Yomiuri Shimbun reported on Sept. 21 that the facial recognition system was designed to detect certain ex-convicts.

Other Japan Railways group companies do not currently use security cameras with facial recognition technology at their stations.

JR East officials said they had received the consent of the Personal Information Protection Commission (PIPC) to proceed with the plan.

Suspects on police wanted lists and individuals acting in a suspicious manner at stations would also be targeted by the facial recognition system, company officials added.

The government has a program that notifies crime victims when the offender has been released from prison after serving the sentence or has been paroled.

JR East officials explained that the company had a right to be informed about ex-convicts or parolees who at one time were suspected of major crimes against the company.

Kiyoshi Sawaki, a deputy secretary-general at the PIPC, said the Personal Information Protection Law seeks to balance the protection and the use of information obtained through facial recognition technology. He added that the JR East case did not upset that balance because the main purpose of using the information was crime prevention.

But the plan runs counter to trends abroad regarding facial recognition data. In April, the European Commission proposed rules that would largely prohibit police from using real-time facial recognition in public spaces. Some state and municipal governments in the United States have also implemented bans on such use.

Experts in Japan are divided over the use of facial recognition technology.

Yusaku Fujii, a professor of safety engineering at Gunma University, said social rules needed to be established on issues such as the privacy of ex-convicts and parolees who are seeking a fresh start in society. But he added that using facial recognition technology was likely unavoidable to ensure public safety.

Hiroshi Miyashita, a professor of constitutional law at Chuo University, said a private-sector company such as JR East should never be allowed to register and use facial information about those who have served prison terms.

“Even if crime prevention is the main objective for acquiring and using such data, it should be limited only to cases of high urgency,” Miyashita said.

(This article was written by Takashi Ogawa and Yasukazu Akada.)
